Technology Insight – ICT Prototyping Framework for the Supply Chain scenarios

By Italian National Inter-university Consortium of Telecommunications (CNIT)

Introduction

One of the crucial challenges of maritime transport is digitalization through the adoption and implementation of the proper ICT solutions. Developing and deploying such solutions allows seaports to increase their overall efficiency, lower costs, shorten the execution times of intra-terminal operations, improve information flow and decision-making and, finally, reduce their environmental footprint by addressing sustainability challenges. Nevertheless, digitalization levels are heterogeneous and depend on different factors, such as seaport size, cargo volumes and types, stakeholders' needs, business models and the amount of investment.

With that said, since ICT innovation is considered the key enabler of Industry 4.0 processes and the groundwork for sustainable growth, the Port Network Authority of the North Tyrrhenian Sea (AdSP-MTS) has started a strategic collaboration with the Italian National Inter-university Consortium of Telecommunications (CNIT) to define and implement a Digital Agenda [1] for the port network, supporting technology transfer activities based on the adoption of disruptive and cutting-edge technologies (e.g., IoT and distributed monitoring, digital twinning, blockchain and DLTs, the 5G technology standard, autonomous driving and C-ITS, AR/VR-based services and applications).

Reference ICT Stack – The case of Livorno Port

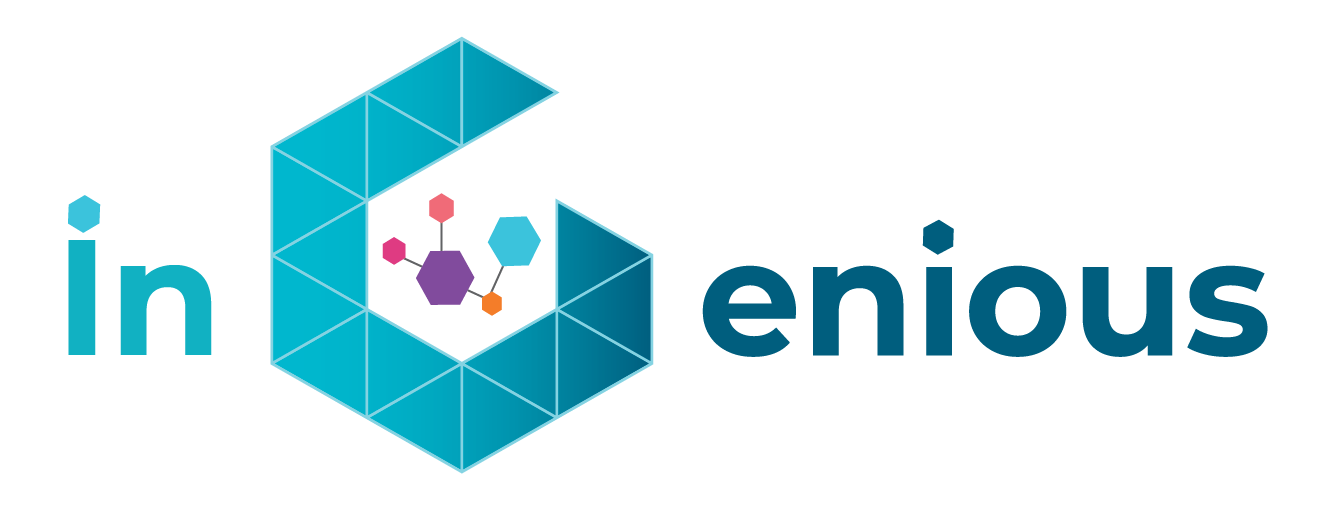

To address the new needs and requirements of the Port Community, the previous approach to application development and service provisioning, based on monolithic technological solutions that are difficult to scale, has been abandoned. For this reason, CNIT has invested considerable effort in standardizing an ICT reference stack, known as the MONICA Standard Platform (see Figure 1), based on standard and open-source technological solutions that support the operational and digital needs of the Port of Livorno [2].

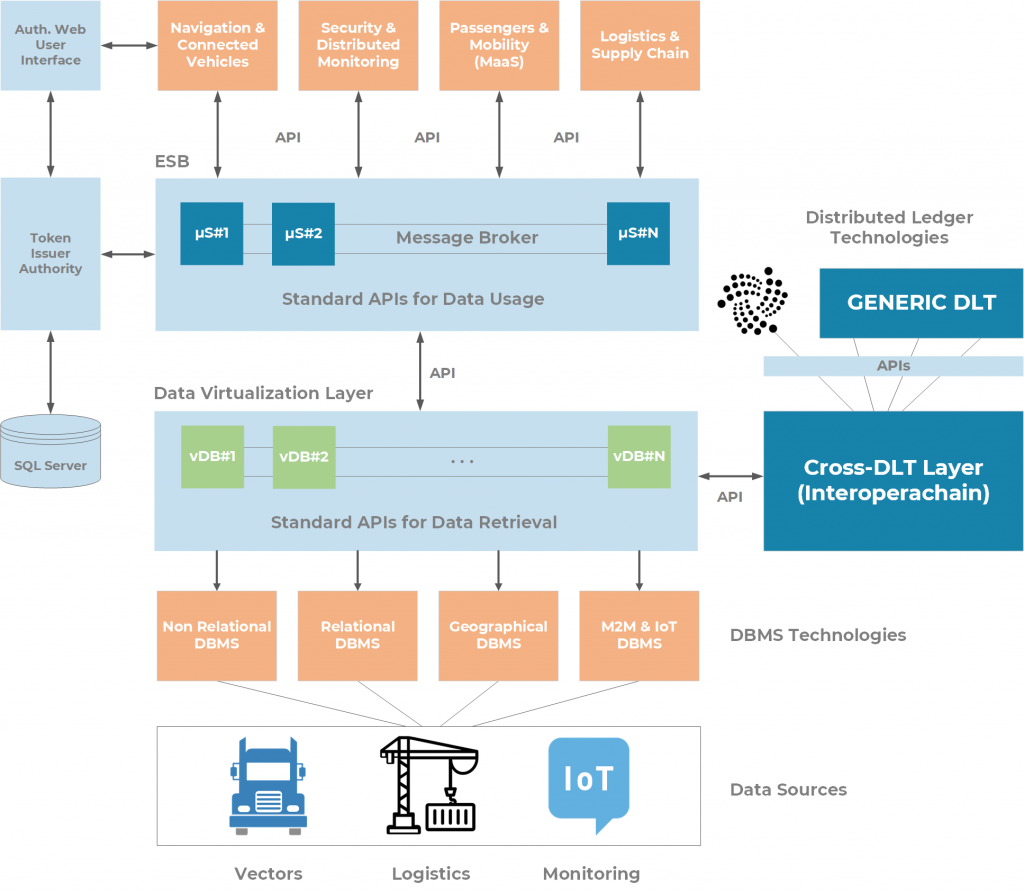

The ICT stack is structured as a private cloud with full decoupling of the three canonical layers (data, business and application), and it is referenced by the AdSP-MTS in current and future tenders and procurements. The cloud has been deployed in a local laboratory [3] within the Port of Livorno (which acts as a staging environment with dedicated computational resources) to allow on-field, real-time testing and validation of the most recent functionalities before release (see Figure 2).

Configured as a staging environment for demo applications built according to the reference ICT stack shown in Figure 1, the private cloud allows these demo applications to be turned into funding opportunities for industries and stakeholders from the Port Community, helping them bridge the gap from prototype to final product or service.

All cloud resources are built on top of the local connectivity layer of the Port of Livorno, which is fully covered by a fiber optic backbone that reaches all terminals and gates in the port area, as depicted in Figure 2. On the one hand, this star-shaped fiber optic network connects all digital resources (e.g., sensors and actuators, network adapters, ordinary and industrial PCs) to a LAN. On the other hand, it mitigates the digital divide suffered by certain areas, making it possible to provide Internet access to government institutions (notably the Coast Guard, the State and Fiscal Police corps and the Customs Agency) through the ISP selected by the AdSP-MTS. In addition, a complementary wireless backbone covers the maritime area with 100 Mbps Wi-Fi access points.

The adoption of a standard, microservice-oriented architecture enables, on the one hand, the agile integration of existing legacy systems into a single flexible and interoperable architecture and, on the other, a scalable approach to the development of new prototype services.

Furthermore, the introduction of open-source and standard solutions such as the Data Virtualization Layer (DVL) and the Enterprise Service Bus (ESB) makes it possible to define more efficient process control policies (e.g., for service and data access, user management, API management and security) without compromising the scalability of the architecture.
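As a rough illustration of how such policies can be enforced centrally, without touching the services behind the bus, the Python sketch below models a gateway that intercepts every API call and runs it through a chain of policies (authentication, path-based authorization, per-key rate limiting) before forwarding it. It is a hypothetical toy: `Request`, `Gateway`, the API keys, paths and quotas are invented for illustration and do not reflect the configuration model of WSO2 API Manager or of the MONICA platform.

```python
import time
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Tuple


@dataclass
class Request:
    api_key: str
    path: str
    method: str


# A policy returns None to let the request pass, or an error string to reject it
Policy = Callable[[Request], Optional[str]]

# Hypothetical key registry: API key -> (consumer name, allowed path prefixes)
API_KEYS: Dict[str, Tuple[str, List[str]]] = {
    "key-terminal-op": ("terminal_operator", ["/gate-events"]),
    "key-port-auth": ("port_authority", ["/gate-events", "/vessel-events"]),
}


def authenticate(req: Request) -> Optional[str]:
    return None if req.api_key in API_KEYS else "401 unknown API key"


def authorize(req: Request) -> Optional[str]:
    entry = API_KEYS.get(req.api_key)
    if entry is None:
        return "401 unknown API key"
    _, allowed_prefixes = entry
    if any(req.path.startswith(p) for p in allowed_prefixes):
        return None
    return "403 path not allowed for this consumer"


def make_rate_limiter(max_calls: int, window_s: float) -> Policy:
    """Sliding-window limiter: at most max_calls per API key within window_s seconds."""
    history: Dict[str, List[float]] = {}

    def limit(req: Request) -> Optional[str]:
        now = time.monotonic()
        recent = [t for t in history.get(req.api_key, []) if now - t < window_s]
        if len(recent) >= max_calls:
            history[req.api_key] = recent
            return "429 too many requests"
        recent.append(now)
        history[req.api_key] = recent
        return None

    return limit


class Gateway:
    """Intercepts every call and runs the policy chain before reaching the backend."""

    def __init__(self, policies: List[Policy], backend: Callable[[Request], str]) -> None:
        self.policies = policies
        self.backend = backend

    def handle(self, req: Request) -> str:
        for policy in self.policies:  # the first rejection short-circuits the chain
            error = policy(req)
            if error is not None:
                return error
        return self.backend(req)


if __name__ == "__main__":
    gateway = Gateway([authenticate, authorize, make_rate_limiter(2, 60.0)],
                      backend=lambda r: f"200 OK {r.method} {r.path}")
    print(gateway.handle(Request("key-terminal-op", "/gate-events", "GET")))
    print(gateway.handle(Request("key-terminal-op", "/vessel-events", "GET")))
```

Keeping each policy as a small independent function mirrors the decoupling argument above: a new rule (for example, an audit log) can be appended to the chain without changing the backend services.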

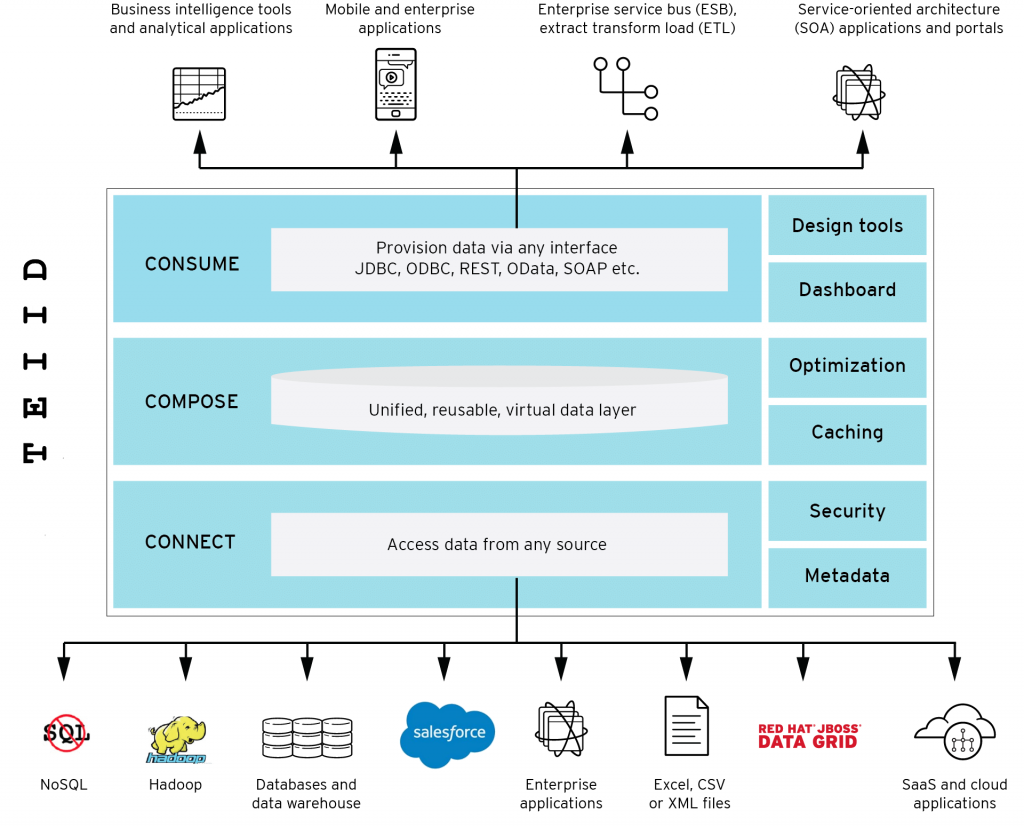

As depicted in Figure 1, the MONICA Standard Platform is based on full decoupling between the canonical layers of the three-tier architecture: data layer, business logic layer and application layer. To achieve this, two core components have been adopted for the ICT stack implementation, the Data Virtualization Layer and the Enterprise Service Bus:

- Data Virtualization Layer [4] is a cloud-native, open-source data virtualization platform that allows distributed databases, as well as multiple heterogeneous data sources, to be accessed through a common, standard set of APIs (e.g., JDBC, ODBC, REST, OData, SOAP). On the one hand, it aggregates data coming from disparate data sources (the Livorno Port data lake); on the other, it allows a proper set of roles to be defined (following the create-read-update-delete, or CRUD, approach) so that each data consumer can access specific data sets (see Figure 3).

- Enterprise Service Bus [6] is an open-source, standardized and componentized middleware platform that implements typical enterprise service bus functionalities, supporting the development of microservice logic (through an SDK based on the ASP.NET Core framework) and API life-cycle management. It secures, protects, manages and scales API calls by intercepting API requests and applying security policies.
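The following Python sketch shows, under simplifying assumptions, what a single access point over heterogeneous sources with CRUD-style roles can look like: a relational table (in-memory SQLite) and a JSON-like feed are wrapped by adapters that expose rows in a common schema, and every read is checked against a role/permission table. The source names, roles and schema are hypothetical, only the read path is implemented, and it does not reproduce the API of any specific data virtualization product.

```python
import sqlite3
from typing import Dict, Iterable, List, Set

# Hypothetical role -> virtual view -> allowed CRUD operations
PERMISSIONS: Dict[str, Dict[str, Set[str]]] = {
    "port_authority": {"gate_events": {"C", "R", "U", "D"}},
    "analytics_app": {"gate_events": {"R"}},
}


class SqliteSource:
    """Adapter for a relational source (stands in for a database reached via JDBC/ODBC)."""

    def __init__(self, conn: sqlite3.Connection, table: str) -> None:
        self.conn = conn
        self.table = table  # configured by the layer itself, never taken from user input

    def rows(self) -> Iterable[dict]:
        query = f"SELECT container_id, gate, ts FROM {self.table}"
        for container_id, gate, ts in self.conn.execute(query):
            yield {"container_id": container_id, "gate": gate, "ts": ts}


class FeedSource:
    """Adapter for a JSON-like feed (stands in for a REST/OData endpoint)."""

    def __init__(self, records: List[dict]) -> None:
        self.records = records

    def rows(self) -> Iterable[dict]:
        for r in self.records:
            # Map the feed's own field names onto the common view schema
            yield {"container_id": r["cntr"], "gate": r["gateId"], "ts": r["time"]}


class VirtualLayer:
    """Single access point: one virtual view may be backed by several sources."""

    def __init__(self) -> None:
        self.views: Dict[str, list] = {}

    def register(self, view: str, source) -> None:
        self.views.setdefault(view, []).append(source)

    def read(self, role: str, view: str) -> List[dict]:
        allowed = PERMISSIONS.get(role, {}).get(view, set())
        if "R" not in allowed:
            raise PermissionError(f"role {role!r} may not read view {view!r}")
        rows = [row for source in self.views.get(view, []) for row in source.rows()]
        # Same-format ISO 8601 strings sort chronologically
        return sorted(rows, key=lambda r: r["ts"])


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE gate_log (container_id TEXT, gate TEXT, ts TEXT)")
    conn.execute("INSERT INTO gate_log VALUES ('MSCU1234567', 'G1', '2021-05-01T08:00')")
    dvl = VirtualLayer()
    dvl.register("gate_events", SqliteSource(conn, "gate_log"))
    dvl.register("gate_events", FeedSource(
        [{"cntr": "TGHU7654321", "gateId": "G3", "time": "2021-05-01T07:30"}]))
    print(dvl.read("analytics_app", "gate_events"))
```

The consumer never learns which rows came from the database and which from the feed; adding a third source is a matter of writing one more adapter and registering it under the same view.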

Finally, as part of the overall ICT stack, a cross-DLT layer (namely Interoperachain) has been adopted to enable interoperability with the most common Distributed Ledger Technologies (e.g., IOTA [7]) as well as with commercial blockchain-based platforms (e.g., TradeLens [8]), so that data immutability can be guaranteed and DLT-native capabilities can be exploited for secure data management in the seaport domain.
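To make the proof-of-existence idea concrete, here is a minimal Python sketch of a cross-DLT abstraction: each record is serialized canonically and hashed with SHA-256, the digest is anchored on every configured ledger through a common interface, and verification recomputes the digest and compares it with what each ledger returns. The in-memory ledgers are stand-ins for real DLT clients, and `CrossDLTLayer`, `anchor` and `verify` are hypothetical names, not the Interoperachain API.

```python
import hashlib
import json
import uuid
from abc import ABC, abstractmethod
from typing import Dict, List


def digest(record: dict) -> str:
    """SHA-256 of a canonical JSON form (sorted keys, compact separators)."""
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


class Ledger(ABC):
    """Common interface every DLT adapter must implement (hypothetical)."""
    name: str

    @abstractmethod
    def anchor(self, record_hash: str) -> str:
        """Write the hash and return a transaction id."""

    @abstractmethod
    def lookup(self, tx_id: str) -> str:
        """Return the hash stored under a transaction id."""


class InMemoryLedger(Ledger):
    """Append-only stand-in for a real DLT client."""

    def __init__(self, name: str) -> None:
        self.name = name
        self._txs: Dict[str, str] = {}

    def anchor(self, record_hash: str) -> str:
        tx_id = uuid.uuid4().hex
        self._txs[tx_id] = record_hash
        return tx_id

    def lookup(self, tx_id: str) -> str:
        return self._txs[tx_id]


class CrossDLTLayer:
    def __init__(self, ledgers: List[Ledger]) -> None:
        self.ledgers = {ledger.name: ledger for ledger in ledgers}

    def anchor(self, record: dict) -> Dict[str, str]:
        """Anchor the record's digest on every ledger; returns one receipt per ledger."""
        record_hash = digest(record)
        return {name: ledger.anchor(record_hash) for name, ledger in self.ledgers.items()}

    def verify(self, record: dict, receipts: Dict[str, str]) -> Dict[str, bool]:
        """Recompute the digest and cross-check it against each ledger."""
        record_hash = digest(record)
        return {name: self.ledgers[name].lookup(tx_id) == record_hash
                for name, tx_id in receipts.items()}


if __name__ == "__main__":
    layer = CrossDLTLayer([InMemoryLedger("ledger_a"), InMemoryLedger("ledger_b")])
    event = {"type": "gate-in", "container_id": "MSCU1234567", "ts": "2021-05-01T08:00"}
    receipts = layer.anchor(event)
    print(layer.verify(event, receipts))
    print(layer.verify(dict(event, ts="2021-05-01T09:00"), receipts))
```

Anchoring only the digest, rather than the record itself, is one common design choice: it keeps personal or commercially sensitive data off-chain while still letting any party detect later modifications.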

iNGENIOUS – Supply Chain Ecosystem Integration scenario

From a technological perspective, standard approaches for efficient and secure data management from a single access point are still missing. Full interoperability across M2M platforms still has to be tackled on a case-by-case, platform-by-platform basis, due to the wide range of possible applications, design choices, formats and configurations that can be adopted within the IoT domain. Many of the available M2M solutions have been developed as application silos, where interoperability is limited by the scope of the solution. On the other hand, the DLT industry is highly fragmented: there is still a lack of consistent standardization across the available DLT solutions, which do not interoperate with each other, and their security capabilities are not fully exploited.

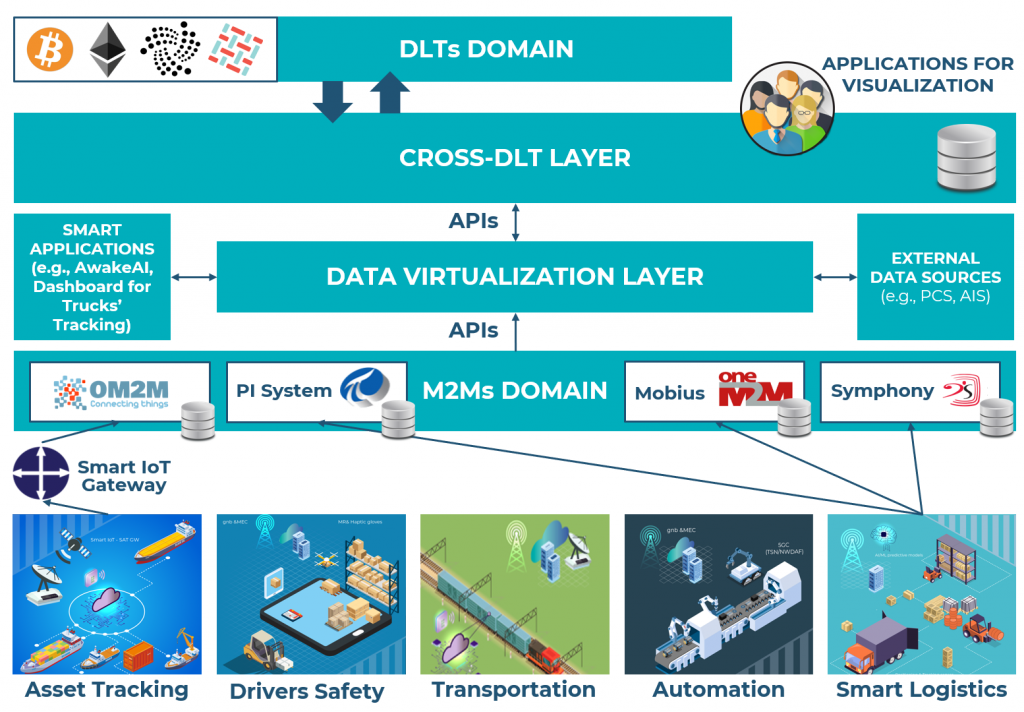

The iNGENIOUS – Supply Chain Ecosystem Integration use case [9] focuses on two interoperable layers, the Data Virtualization Layer and the Cross-DLT Layer (see Figure 4). They abstract the complexity of the underlying M2M platforms (and of external data sources such as Port Community Systems) and of the DLT solutions on top of them, while guaranteeing data privacy by means of anonymization/pseudonymization techniques (implemented at the Data Virtualization Layer) and data immutability by exploiting DLT-native capabilities.
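As one possible realization of the pseudonymization step, assuming a keyed-hash approach, the Python sketch below replaces personal fields with HMAC-SHA256 tokens. The same input always yields the same token (so third-party analytics can still correlate repeated visits by the same truck), but the original value cannot be recovered without the secret key. The field list, token prefix and key handling are illustrative assumptions; the source does not state which pseudonymization function is used.

```python
import hashlib
import hmac
import re
from typing import Dict, Set

# Hypothetical secret; a real deployment would keep it in a managed key store
SECRET_KEY = b"replace-with-a-managed-secret"

# Fields treated as personal data in this sketch (a fixed list, for simplicity)
PERSONAL_FIELDS: Set[str] = {"truck_plate", "driver_name"}


def pseudonymize_value(value: str, key: bytes = SECRET_KEY) -> str:
    # Normalize spacing and case so "AB 123 CD" and "ab123cd" give the same token
    normalized = re.sub(r"\s+", "", value).upper()
    mac = hmac.new(key, normalized.encode("utf-8"), hashlib.sha256)
    # Truncated to 16 hex chars (64 bits) for readability
    return "pseud_" + mac.hexdigest()[:16]


def pseudonymize_record(record: Dict[str, str]) -> Dict[str, str]:
    # Replace only non-empty personal fields; everything else passes through unchanged
    return {
        field: pseudonymize_value(value) if field in PERSONAL_FIELDS and value else value
        for field, value in record.items()
    }


if __name__ == "__main__":
    record = {"truck_plate": "AB 123 CD", "gate": "G1", "ts": "2021-05-01T08:00"}
    print(pseudonymize_record(record))
```

Because the mapping is deterministic, whoever holds the key can re-identify records by recomputing tokens, so the output is pseudonymous rather than anonymous data; key custody is therefore part of the design.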

The iNGENIOUS – Supply Chain Ecosystem Integration scenario, based on the adoption of the interoperable layer, includes the following steps in its workflow:

- Data coming from different iNGENIOUS use cases (meteorological data, tracking data, container and logistics data, gate-in and gate-out data, AIS data) are used to feed different M2M platforms (Mobius oneM2M [10], Eclipse OM2M [11], Symphony [12] and OSIsoft PI System [13]). Each M2M platform and/or external system (e.g., Port Community System [14]) stores raw data according to its own data management policies.

- The Data Virtualization Layer interacts with the available M2M platforms and data sources to retrieve raw data, aggregates them according to a common data model for maritime events (e.g., gate-in, gate-out, vessel-arrival, vessel-departure and seal-opening) and finally makes them available to the Cross-DLT Layer [15] for ingestion into different DLTs (e.g., Bitcoin, IOTA, Ethereum and Hyperledger Fabric). In addition, personal data (e.g., truck plate numbers) are automatically identified at the Data Virtualization Layer so that a pseudonymization function can be applied to fulfil GDPR requirements [16] and the data can be exploited for analytics by third-party applications.

- End users (e.g., the Port Authority of Valencia and the Livorno Port Authority) can access their own data and automatically verify proof of existence and data immutability by interacting either with the Cross-DLT Layer or directly with the specific DLT (for a cross-check).
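The aggregation step in the workflow above can be sketched as a set of per-platform adapters that map raw payloads onto one common maritime event model. In the Python sketch below, the two raw payload shapes (a oneM2M-style content instance and a flat historian-style record) are simplified assumptions rather than exact platform formats, and `MaritimeEvent`, `normalize` and the field names are hypothetical; only the event types come from the list above.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Callable, Dict

# Event types taken from the common data model described in the workflow
EVENT_TYPES = {"gate-in", "gate-out", "vessel-arrival", "vessel-departure", "seal-opening"}


@dataclass(frozen=True)
class MaritimeEvent:
    event_type: str
    subject_id: str   # container or vessel identifier
    location: str     # gate or berth
    timestamp: str    # ISO 8601, UTC
    source: str       # platform the raw data came from


def from_onem2m_style(raw: dict) -> MaritimeEvent:
    # Assumed shape: {"m2m:cin": {"con": "<JSON string>", "ct": "YYYYMMDDTHHMMSS"}}
    cin = raw["m2m:cin"]
    content = json.loads(cin["con"])
    ts = datetime.strptime(cin["ct"], "%Y%m%dT%H%M%S").replace(tzinfo=timezone.utc)
    return MaritimeEvent(content["event"], content["containerId"], content["gate"],
                         ts.isoformat(), "onem2m")


def from_historian_style(raw: dict) -> MaritimeEvent:
    # Assumed shape: flat record with an upper-case event kind and epoch seconds
    ts = datetime.fromtimestamp(raw["epoch"], tz=timezone.utc)
    return MaritimeEvent(raw["kind"].lower().replace("_", "-"), raw["imo"], raw["berth"],
                         ts.isoformat(), "historian")


ADAPTERS: Dict[str, Callable[[dict], MaritimeEvent]] = {
    "onem2m": from_onem2m_style,
    "historian": from_historian_style,
}


def normalize(platform: str, raw: dict) -> MaritimeEvent:
    """Map a raw payload to the common model and reject unknown event types."""
    event = ADAPTERS[platform](raw)
    if event.event_type not in EVENT_TYPES:
        raise ValueError(f"unsupported event type {event.event_type!r}")
    return event


if __name__ == "__main__":
    raw_a = {"m2m:cin": {"con": json.dumps({"event": "gate-in", "containerId": "MSCU1234567",
                                             "gate": "G1"}), "ct": "20210501T080000"}}
    raw_b = {"kind": "VESSEL_ARRIVAL", "imo": "IMO9000000", "berth": "B12", "epoch": 1619856000}
    print(asdict(normalize("onem2m", raw_a)))
    print(asdict(normalize("historian", raw_b)))
```

Once every source speaks the same event model, the downstream steps (pseudonymization, hashing and anchoring) only have to be written once, which is the kind of reuse the interoperable layer aims for.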

The interoperable layer could allow different stakeholders from the Port Community to manage and keep track of their own data coming from different M2M platforms, benefiting from the security capabilities provided by different DLTs. Stakeholders can take control of their own data flows and assets, from the time data are produced by IoT devices and stored within different M2M platforms to the time they are secured by different DLT-based solutions.

Expected Outcomes and Impacts

While major M2M standardization activities currently focus mainly on M2M device connectivity, access network technologies, and the identification and definition of data formats, standard approaches for data management and governance from a single access point are still missing. Development is limited to the system owners who understand the particular API, which leads to high development and support costs. To overcome this challenge, it is essential to define more comprehensive standards, in particular regarding communication interfaces and data models. In addition, it is important to have a common platform for data governance that can be reused across multiple applications, avoiding the need to completely redesign solutions for each application. To overcome the above-mentioned challenges and barriers, the iNGENIOUS project adopts the Data Virtualization Layer as a viable approach to cross-M2M interoperability. Indeed, it enables a centralized approach to data access, exchange and management (including privacy and security aspects) across heterogeneous M2M platforms and the DLT solutions on top of them, abstracting their complexity from the final user.

To outline the potential of the proposed solution, the following societal, business and technological impacts have been identified and are briefly summarized below (a further assessment is expected to be performed during the lifetime of the iNGENIOUS project):

- The iNGENIOUS Interoperable Layer can support data exchange between different stakeholders from the Port Community (e.g., Port Authorities, Container Terminal Operators, Freight Forwarders, Institutional Bodies, Shippers, Carriers, Maritime Agencies) by interconnecting them and providing them with a way to govern their data across digital networks (societal impacts).

- External and third-party organizations and companies will spend less time building and managing data integration processes for connecting distributed data sources, saving both costs and time (business impacts).

- The iNGENIOUS Interoperable Layer enables a virtual federation of different IoT/M2M platforms (through the Data Virtualization Layer component), overcoming compatibility issues between standard and non-standard solutions. Together, the Data Virtualization Layer and the Cross-DLT Layer provide a common interface both for interacting with the most common DLT solutions to obtain data immutability proofs and for consuming data coming from the underlying IoT domain (technological impacts).

References

[2] https://www.mdpi.com/1424-8220/22/1/246

[3] https://jlab-ports.cnit.it/

[5] https://teiid.io/teiid_wildfly/

[6] https://wso2.com/api-manager/

[7] https://ieeexplore.ieee.org/document/8690769

[8] https://www.tradelens.com/

[9] https://ingenious-iot.eu/web/use-cases/

[10] https://github.com/IoTKETI/oneM2MBrowser

[11] https://www.eclipse.org/om2m/

[12] https://www.nextworks.it/it/prodotti/symphony

[13] https://www.osisoft.com/industries/transportation/ports

[14] https://tpcs.tpcs.eu/login-en.aspx